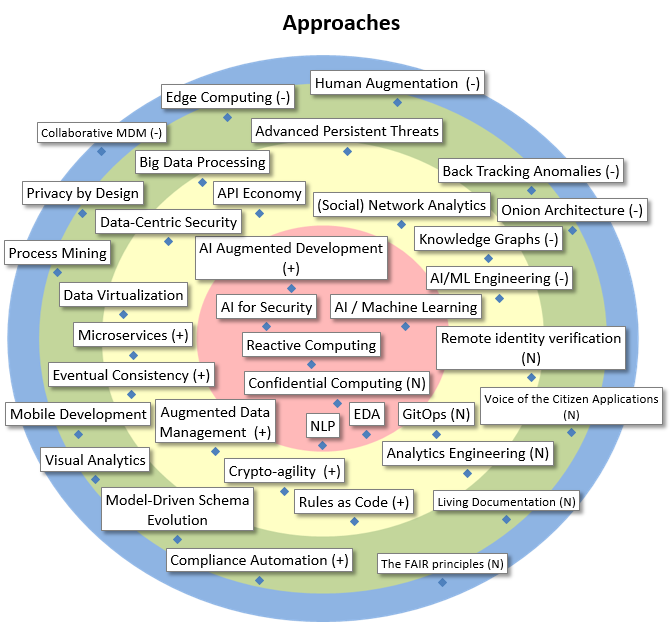

| AI / Machine Learning |

AI is the broader concept of machines acting in a way that we would consider “smart”. Machine Learning is a form of AI based on giving machines access to data and letting them learn for themselves. The field includes neural networks, deep learning and language processing. A possible application is fraud detection. |

| EDA |

An Event-Driven Architecture (EDA) can offer many advantages over more traditional approaches. Events and asynchronous communication can make a system much more responsive and efficient, because producers do not have to wait for consumers. Moreover, the event model often resembles the actual incoming business data more closely. |
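
The decoupling between event producers and consumers can be sketched with a minimal publish/subscribe bus. This is an illustrative, synchronous, in-process sketch: the class names and the `InvoicePaid` event are hypothetical, and in a production EDA a message broker would typically sit between producers and consumers so that they communicate asynchronously.

```python
from collections import defaultdict
from dataclasses import dataclass, field
from typing import Callable, DefaultDict, List


@dataclass(frozen=True)
class Event:
    """An immutable record of something that happened in the business domain."""
    name: str
    payload: dict = field(default_factory=dict)


Handler = Callable[[Event], None]


class EventBus:
    """Minimal in-process publish/subscribe bus (a sketch, not a message broker)."""

    def __init__(self) -> None:
        self._handlers: DefaultDict[str, List[Handler]] = defaultdict(list)

    def subscribe(self, event_name: str, handler: Handler) -> None:
        self._handlers[event_name].append(handler)

    def publish(self, event: Event) -> None:
        # The producer does not know who reacts to the event: components stay decoupled.
        for handler in self._handlers[event.name]:
            handler(event)


# Usage with a hypothetical "InvoicePaid" event and two independent consumers.
audit_log: List[int] = []
notifications: List[str] = []
bus = EventBus()
bus.subscribe("InvoicePaid", lambda e: audit_log.append(e.payload["invoice_id"]))
bus.subscribe("InvoicePaid", lambda e: notifications.append(f"paid {e.payload['invoice_id']}"))
bus.publish(Event("InvoicePaid", {"invoice_id": 42}))
print(audit_log, notifications)  # [42] ['paid 42']
```

Adding a third consumer only requires another `subscribe` call; the publishing code does not change.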

| NLP |

Natural Language Processing (NLP), part of AI, includes techniques to distill information from unstructured textual data, with the aim of using that information in analytics algorithms. It is used for text mining, sentiment analysis, entity recognition, natural language generation (NLG)… |

| AI for Security |

Non-traditional methods, such as AI, that improve the analyses used to secure systems and applications (e.g. user behaviour analytics). |

| Reactive Computing |

The flow of (incoming) data, and not an application’s (or CPU’s) regular control flow, governs its architecture and runtime. This is a new paradigm, sometimes even driven by new hardware, that contrasts with the traditional, control-flow-driven way of working. Also known as Dataflow Architecture and related to EDA. |
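
A minimal sketch of the dataflow idea, assuming a push-based model: when an input cell receives new data, every cell derived from it recomputes, without any main loop deciding when. `Cell` and `ComputedCell` are hypothetical names; real reactive or dataflow frameworks also handle concurrency, scheduling and glitch-free updates in diamond-shaped graphs, which this sketch omits.

```python
from typing import Any, Callable, List


class Cell:
    """A value in a dataflow graph; new values are pushed to dependent cells."""

    def __init__(self, value: Any = None) -> None:
        self.value = value
        self._dependents: List["ComputedCell"] = []

    def set(self, value: Any) -> None:
        self.value = value
        for dependent in self._dependents:
            dependent.recompute()


class ComputedCell(Cell):
    """A cell derived from input cells; it recomputes whenever an input changes."""

    def __init__(self, fn: Callable[..., Any], *inputs: Cell) -> None:
        super().__init__()
        self._fn = fn
        self._inputs = inputs
        for cell in inputs:
            cell._dependents.append(self)
        self.recompute()

    def recompute(self) -> None:
        # Triggered by arriving data, not by a call from a main control loop.
        self.set(self._fn(*(cell.value for cell in self._inputs)))


price = Cell(10)
quantity = Cell(3)
total = ComputedCell(lambda p, q: p * q, price, quantity)
with_fee = ComputedCell(lambda t: t + 5, total)
print(total.value, with_fee.value)  # 30 35
quantity.set(5)  # new data arrives; the change flows through the graph
print(total.value, with_fee.value)  # 50 55
```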

| AI Augmented Development |

Use of AI and NLP in the development environment: debugging, testing (mutation, fuzzing), generation of code/documentation, augmented coding, recommendations for refactoring, … |

| Confidential Computing |

Confidential computing allows an entity to do computations on data without having access to the data itself. This can be realized in a centralized way with homomorphic encryption or trusted execution environments, or in a decentralized way with secure multiparty computation. |
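
Of the techniques named above, secure multiparty computation is the easiest to sketch. The example below uses additive secret sharing to compute a sum without any single party seeing another party's input. It assumes honest-but-curious parties and simulates all of them in one process; the modulus and the function names are illustrative choices, and a real deployment would exchange shares over authenticated channels.

```python
import secrets
from typing import List

MODULUS = 2**61 - 1  # illustrative prime; all inputs must be smaller than this


def share(secret: int, n_parties: int) -> List[int]:
    """Split a secret into n random shares that add up to it modulo MODULUS."""
    shares = [secrets.randbelow(MODULUS) for _ in range(n_parties - 1)]
    shares.append((secret - sum(shares)) % MODULUS)
    return shares


def secure_sum(private_inputs: List[int]) -> int:
    """Simulate n parties jointly computing the sum of their private inputs."""
    n = len(private_inputs)
    # Party i splits its input and sends share j to party j.
    shares_by_sender = [share(x, n) for x in private_inputs]
    # Party j adds up the shares it received; each one looks uniformly random to it.
    partial_sums = [sum(shares_by_sender[i][j] for i in range(n)) % MODULUS for j in range(n)]
    # Only combining all partial sums reveals the result, and only the result.
    return sum(partial_sums) % MODULUS


salaries = [3100, 2800, 4050]  # each value known to one party only
print(secure_sum(salaries))  # 9950
```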

| AI/ML Engineering |

In machine learning ‘by hand’, a lot of time is lost between training a model and putting it into production, and then waiting for feedback for potential retraining. CD4ML (continuous delivery for ML) attempts to automate this cycle. |

| Knowledge Graphs |

Knowledge Graphs relate entities in a meaningful graph structure to facilitate various processes, from information retrieval to business analytics. Knowledge graphs typically integrate data from heterogeneous sources such as databases, documents, and even human input. They are part of AI. |
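
Knowledge graphs are often stored as subject-predicate-object triples. The sketch below uses a plain Python set of triples and a wildcard pattern match to combine facts from two hypothetical sources. The entity and predicate names are invented for illustration; production systems typically use an RDF triple store queried with SPARQL, or a property-graph database.

```python
from typing import Iterator, Optional, Set, Tuple

Triple = Tuple[str, str, str]  # (subject, predicate, object)


class KnowledgeGraph:
    def __init__(self) -> None:
        self.triples: Set[Triple] = set()

    def add(self, subject: str, predicate: str, obj: str) -> None:
        self.triples.add((subject, predicate, obj))

    def match(self, subject: Optional[str] = None, predicate: Optional[str] = None,
              obj: Optional[str] = None) -> Iterator[Triple]:
        """Yield the triples matching a pattern; None acts as a wildcard."""
        for s, p, o in self.triples:
            if subject in (None, s) and predicate in (None, p) and obj in (None, o):
                yield (s, p, o)


kg = KnowledgeGraph()
# Facts from a (hypothetical) company database
kg.add("AcmeCorp", "hasDirector", "Alice")
kg.add("AcmeCorp", "locatedIn", "Brussels")
# Facts extracted from a (hypothetical) document
kg.add("Alice", "directorOf", "BetaLtd")

# Which companies are linked to AcmeCorp through a shared director?
directors = {o for _, _, o in kg.match("AcmeCorp", "hasDirector")}
linked = sorted(c for d in directors for _, _, c in kg.match(d, "directorOf"))
print(linked)  # ['BetaLtd']
```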

| (Social) Network Analytics |

Social network analytics (SNA) is the process of investigating social structures (i.e., relations between people and other entities such as companies, addresses, …) by the use of network and graph theory, as well as concepts from sociology. |
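
Degree centrality is one of the simplest network measures used in SNA: it scores each entity by the fraction of the other entities it is directly connected to. The sketch below computes it in plain Python on a small, made-up graph linking people, a company and an address; libraries such as NetworkX offer this (`degree_centrality`) and many other measures.

```python
from collections import defaultdict
from typing import Dict, Iterable, Set, Tuple


def build_graph(edges: Iterable[Tuple[str, str]]) -> Dict[str, Set[str]]:
    """Undirected graph stored as an adjacency map."""
    graph: Dict[str, Set[str]] = defaultdict(set)
    for a, b in edges:
        graph[a].add(b)
        graph[b].add(a)
    return graph


def degree_centrality(graph: Dict[str, Set[str]]) -> Dict[str, float]:
    """Fraction of the other nodes each node is directly connected to (needs >= 2 nodes)."""
    n = len(graph)
    return {node: len(neighbours) / (n - 1) for node, neighbours in graph.items()}


# Hypothetical relations between people, a company and an address
edges = [
    ("Alice", "AcmeCorp"),
    ("Bob", "AcmeCorp"),
    ("Bob", "Main Street 1"),
    ("Carol", "Main Street 1"),
]
centrality = degree_centrality(build_graph(edges))
print(centrality["Bob"], centrality["Alice"])  # 0.5 0.25
```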

| Advanced Persistent Threats |

Type of threat used to carry out a long-term computer attack on a well-defined target. Closely resembles industrial espionage techniques. |

| API Economy |

APIs, which connect services within and across multiple systems, or even to third parties, are becoming prevalent and drive a new business model centered around the integration of readily available data and services. They also help achieve loose coupling between components. |

| Big Data Processing |

Big data analytics solutions require an architecture that 1) executes the calculations where the data is stored, 2) spreads data and calculations over several nodes, and 3) uses a data warehouse architecture that makes all types of data available to analytical tools in a transparent way. |

| Data-Centric Security |

Approach for protecting sensitive data consistently and centrally, regardless of its format or location (using e.g. data anonymization or tokenization technologies in conjunction with centralized policies and governance). |
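
Tokenization, one of the technologies mentioned above, can be sketched as a central vault that replaces sensitive values with random tokens and applies a single policy whenever someone asks for the original value. The vault class, the role names and the policy are hypothetical; a real deployment would persist the vault securely and integrate it with the organization's identity and access management.

```python
import secrets
from typing import Dict, Set

# Hypothetical central policy: which roles may see original values.
DETOKENIZE_ROLES: Set[str] = {"fraud_investigator"}


class TokenVault:
    """Central store mapping sensitive values to random, meaningless tokens."""

    def __init__(self) -> None:
        self._token_to_value: Dict[str, str] = {}
        self._value_to_token: Dict[str, str] = {}

    def tokenize(self, value: str) -> str:
        # The same value always gets the same token, so tokenized data sets
        # can still be joined and counted without exposing the value.
        if value not in self._value_to_token:
            token = "tok_" + secrets.token_hex(8)
            self._value_to_token[value] = token
            self._token_to_value[token] = value
        return self._value_to_token[value]

    def detokenize(self, token: str, role: str) -> str:
        # The policy is enforced here, whatever system or file format the token ends up in.
        if role not in DETOKENIZE_ROLES:
            raise PermissionError(f"role {role!r} may not detokenize")
        return self._token_to_value[token]


vault = TokenVault()
record = {"citizen_id": vault.tokenize("ID-0001"), "amount": 120}
print(record["citizen_id"].startswith("tok_"))  # True
print(vault.detokenize(record["citizen_id"], "fraud_investigator"))  # ID-0001
```

Deterministic tokens keep data sets joinable but also reveal which records share a value; issuing a fresh token per occurrence would trade that utility for stronger protection.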

| Data Virtualization |

Methods and tools that give users access to databases with heterogeneous models through a single virtual, logical view. |

| Eventual Consistency |

A general way to evolve systems away from overly restrictive ACID principles: replicas may temporarily diverge, but converge to the same state once all updates have propagated. Adopting it, and pushing it through at the business level, is the only way to keep systems evolving towards a more distributed, scalable, flexible and maintainable lifecycle. |
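
One common way to obtain eventual consistency is to use conflict-free replicated data types (CRDTs). The sketch below is a grow-only counter (G-Counter): each replica accepts updates without coordinating with the others, and merging replica states in any order yields the same value. This is one illustrative technique among several, and the replica names are hypothetical.

```python
from typing import Dict


class GCounter:
    """Grow-only counter CRDT: each replica only ever increments its own entry."""

    def __init__(self, replica_id: str) -> None:
        self.replica_id = replica_id
        self.counts: Dict[str, int] = {}

    def increment(self, amount: int = 1) -> None:
        if amount < 0:
            raise ValueError("a G-Counter can only grow")
        self.counts[self.replica_id] = self.counts.get(self.replica_id, 0) + amount

    def value(self) -> int:
        return sum(self.counts.values())

    def merge(self, other: "GCounter") -> None:
        # Entry-wise maximum is commutative, associative and idempotent,
        # so replicas converge regardless of the order or repetition of syncs.
        for replica, count in other.counts.items():
            self.counts[replica] = max(self.counts.get(replica, 0), count)


brussels, ghent = GCounter("brussels"), GCounter("ghent")
brussels.increment(2)  # both replicas accept writes without coordinating
ghent.increment(3)
print(brussels.value(), ghent.value())  # 2 3  (temporarily inconsistent)
brussels.merge(ghent)
ghent.merge(brussels)
print(brussels.value(), ghent.value())  # 5 5  (converged)
```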

| Microservices |

Independently maintainable and deployable services, kept very small (hence ‘micro’), make an application, or even a large group of related systems, much more flexibly scalable. They also provide functional agility, which allows a system to rapidly support new business opportunities. |

| Augmented Data Management |

Through the addition of AI and machine learning, manual data management tasks will be reduced considerably. Various products will be ‘augmented’, such as those for data quality, metadata management, master data management and data integration, as well as database management systems. |

| Crypto-agility |

Crypto-agility allows an information security system to switch to alternative cryptographic primitives and algorithms without making significant changes to the system’s infrastructure. Crypto-agility facilitates system upgrades and evolution. |
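
A common way to achieve crypto-agility is to treat the algorithm as configuration and to store an algorithm identifier with every cryptographic output, so that old and new algorithms can coexist during a migration. The sketch below applies this to hash digests with Python's standard `hashlib`; the `<algorithm>$<digest>` format and the function names are assumptions for illustration, and the same pattern applies to ciphers and signatures.

```python
import hashlib
import hmac
from typing import Optional

# Switching algorithms means changing configuration, not rewriting callers.
CURRENT_ALGORITHM = "sha3_256"
SUPPORTED_ALGORITHMS = {"sha256", "sha3_256", "blake2b"}


def fingerprint(data: bytes, algorithm: Optional[str] = None) -> str:
    """Return a self-describing digest of the form '<algorithm>$<hex digest>'."""
    algorithm = algorithm or CURRENT_ALGORITHM
    if algorithm not in SUPPORTED_ALGORITHMS:
        raise ValueError(f"unsupported algorithm: {algorithm}")
    return f"{algorithm}${hashlib.new(algorithm, data).hexdigest()}"


def verify(data: bytes, stored: str) -> bool:
    # The stored value names its own algorithm, so legacy digests still verify.
    algorithm = stored.partition("$")[0]
    return hmac.compare_digest(fingerprint(data, algorithm), stored)


def needs_upgrade(stored: str) -> bool:
    """True if the value was produced with an algorithm that is no longer current."""
    return stored.partition("$")[0] != CURRENT_ALGORITHM


legacy = fingerprint(b"contract.pdf", "sha256")
fresh = fingerprint(b"contract.pdf")
print(verify(b"contract.pdf", legacy), verify(b"contract.pdf", fresh))  # True True
print(needs_upgrade(legacy), needs_upgrade(fresh))  # True False
```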

| Rules as Code |

Concept from LegalTech, aiming to make policies both human-readable and machine-readable at the same time, in order to facilitate implementation and arrive at a more consistent and equitable application of the law. |

| Analytics Engineering |

Analytics engineers provide clean data sets to end users, modeling data in a way that empowers end users to answer their own questions. The focus is on transforming, testing, deploying and documenting data. Tools: dbt, Snowflake, Stitch, Fivetran, Looker, Mode, Redash, columnar DBs |

| GitOps |

Best practices coming from DevOps, applied to operations. This means, for instance, that all configuration is specified in files that are maintained under version control (Git) and are machine-readable, so that tools can automate as much as possible. |
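
At the core of GitOps tooling is a reconciliation step that compares the desired state declared in version-controlled files with the actual state of the running system and derives the actions that close the gap. The sketch below shows only that comparison; the JSON string stands in for a file in a Git repository, and its format and the service names are hypothetical. Tools such as Argo CD or Flux run this kind of loop continuously against Kubernetes clusters.

```python
import json
from typing import Dict, List

# Stand-in for a (hypothetical) version-controlled file describing the desired state.
DESIRED_STATE_FILE = """
{
  "web":    {"replicas": 3, "image": "web:1.4"},
  "worker": {"replicas": 2, "image": "worker:2.0"}
}
"""


def plan(desired: Dict[str, dict], actual: Dict[str, dict]) -> List[str]:
    """List the actions that would make the actual state match the declared state."""
    actions = []
    for name in sorted(desired):
        if name not in actual:
            actions.append(f"create {name}")
        elif actual[name] != desired[name]:
            actions.append(f"update {name}")
    for name in sorted(set(actual) - set(desired)):
        actions.append(f"delete {name}")  # not declared in Git, so it should not run
    return actions


running = {
    "web": {"replicas": 2, "image": "web:1.3"},
    "cron": {"replicas": 1, "image": "cron:0.9"},
}
print(plan(json.loads(DESIRED_STATE_FILE), running))
# ['update web', 'create worker', 'delete cron']
```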

| Remote identity verification |

Remote identity verification comprises the process and the tools to verify someone’s identity remotely, without the need for the person to appear in person before an authority. |

| Onion Architecture |

Closely related to ‘Hexagonal Architecture’ (ports and adapters): a set of architectural principles that make the domain model code central to everything and dependent on no other code or framework. Other parts of the program code may depend on the domain code, but never the reverse. Has recently gained a lot of popularity in the community. |
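
The dependency rule can be sketched in Python: the domain layer defines its own model and the interface (port) it needs, and an outer infrastructure layer implements that interface. All names are hypothetical, and a real project would put each layer in its own module or package; here they share one file so that the example runs on its own.

```python
from dataclasses import dataclass
from typing import Dict, Optional, Protocol


# --- Domain core: depends on nothing outside the standard library ---
@dataclass
class Account:
    account_id: str
    balance: int

    def withdraw(self, amount: int) -> None:
        if amount <= 0 or amount > self.balance:
            raise ValueError("invalid withdrawal")
        self.balance -= amount


class AccountRepository(Protocol):
    """Port defined by the domain; outer layers provide the implementation."""

    def get(self, account_id: str) -> Optional[Account]: ...

    def save(self, account: Account) -> None: ...


def withdraw(repo: AccountRepository, account_id: str, amount: int) -> int:
    """Application service: orchestrates the domain through the port."""
    account = repo.get(account_id)
    if account is None:
        raise KeyError(account_id)
    account.withdraw(amount)
    repo.save(account)
    return account.balance


# --- Outer layer: infrastructure depends on the domain, never the reverse ---
class InMemoryAccountRepository:
    def __init__(self) -> None:
        self._rows: Dict[str, Account] = {}

    def get(self, account_id: str) -> Optional[Account]:
        return self._rows.get(account_id)

    def save(self, account: Account) -> None:
        self._rows[account.account_id] = account


repo = InMemoryAccountRepository()
repo.save(Account("A1", balance=100))
print(withdraw(repo, "A1", 30))  # 70
```

Replacing `InMemoryAccountRepository` with a database-backed adapter would require no change to `Account` or `withdraw`, which is the point of keeping the domain at the center.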

| Back Tracking Anomalies |

Method to detect the causes of data quality problems in data flows between information systems and to fix them structurally. ROI is important and facilitates a win-win approach between institutions. To monitor the anomalies and transactions, an extension to the existing DBMS has to be built. |

| Human Augmentation |

Enhancement of human capabilities using technology and science. It can be very futuristic (e.g. brain implants), but smart glasses could be a realistic physical augmentation. Cognitive augmentation (improving a human’s ability to think and make better decisions) will be made possible thanks to AI. |

| Edge Computing |

Information processing, content collection and content delivery are placed closer to the endpoints to reduce high WAN costs and the unacceptable latency of the cloud. Edge computing is also becoming more relevant for AI solutions (cf. TinyML). |

| Privacy by Design |

Privacy by design calls for privacy to be taken into account throughout the whole engineering process. The European GDPR incorporates privacy by design (Article 25, “data protection by design and by default”). An example of an existing methodology is LINDDUN, a privacy threat modeling framework. |

| Process Mining |

Includes automated process discovery (extracting process models from an information system’s event log), and also offers possibilities to monitor, check and improve processes. Often used in preparation for RPA and other business process initiatives (in the context of digital transformation). |
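
Process discovery typically starts from an event log in which every event has a case identifier, an activity name and a timestamp. The sketch below derives a directly-follows graph, one of the simplest discovery outputs, from such a log; the log content is made up for illustration, and dedicated process mining tools use this kind of relation as input to more advanced discovery algorithms.

```python
from collections import Counter
from itertools import groupby
from typing import List, Tuple

# (case id, activity, timestamp), as it might be exported from an information system
EventLog = List[Tuple[str, str, int]]


def directly_follows(log: EventLog) -> Counter:
    """Count how often activity a is directly followed by activity b within a case."""
    dfg: Counter = Counter()
    ordered = sorted(log, key=lambda event: (event[0], event[2]))  # by case, then time
    for _, events in groupby(ordered, key=lambda event: event[0]):
        activities = [activity for _, activity, _ in events]
        dfg.update(zip(activities, activities[1:]))
    return dfg


log: EventLog = [
    ("c1", "receive", 1), ("c2", "receive", 1), ("c1", "check", 2),
    ("c3", "receive", 2), ("c1", "approve", 3), ("c2", "check", 3),
    ("c3", "check", 4), ("c2", "reject", 5), ("c3", "approve", 6),
]
for (a, b), count in sorted(directly_follows(log).items()):
    print(f"{a} -> {b}: {count}")
# check -> approve: 2
# check -> reject: 1
# receive -> check: 3
```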

| Mobile Development |

Set of techniques, tools and platforms to develop web-based and platform-specific mobile applications. |

| Visual Analytics |

Methodology and enabling tools for combining data visualization and analytics, allowing data to be explored, analyzed and forecast rapidly. This helps modeling in advanced analytics and enables modern, interactive, self-service BI applications. |

| Model-Driven Schema Evolution |

New technologies for the semi-automatic maintenance of conceptual, logical and physical database schemas over time. |

| Compliance Automation |

The (semi-)automation of compliance and compliance verification processes that currently rely on manual input. This requires formalizing, to the extent possible, the regulations and policies that trigger actions. |

| Living Documentation |

Living documentation actively co-evolves with the code, keeping it constantly up to date without requiring separate maintenance. It lives with the code and can be used automatically by tools to generate publishable specifications. Some forms of code annotations are an example. |
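
One form of such annotations can be sketched with a Python decorator that registers each business rule's docstring, so that a specification is generated from the same code that actually runs. The `business_rule` decorator, the rule identifier and the age threshold are hypothetical examples.

```python
from typing import Callable, List, Tuple

_SPEC: List[Tuple[str, str]] = []  # (rule id, description) collected from the code


def business_rule(rule_id: str) -> Callable:
    """Hypothetical annotation: registers the decorated function's docstring as a spec entry."""
    def decorator(fn: Callable) -> Callable:
        _SPEC.append((rule_id, (fn.__doc__ or "").strip()))
        return fn
    return decorator


@business_rule("BR-01")
def is_adult(age: int) -> bool:
    """A person is an adult from the age of 18."""
    return age >= 18


def render_spec() -> str:
    """Generate a publishable (Markdown) specification straight from the code."""
    return "\n".join(f"- **{rule_id}**: {text}" for rule_id, text in _SPEC)


print(render_spec())  # - **BR-01**: A person is an adult from the age of 18.
```

Because the description sits next to the rule's implementation, a change to the rule and a change to its documentation show up in the same code review.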

| Voice of the Citizen Applications |

Comprises a number of approaches to capture and analyze explicit or implicit feedback from users, in order to improve the systems and remove friction. |

| Collaborative MDM |

In Master Data Management, the collaborative and organized management, by their official owners, of anomalies stemming from distributed authentic sources. |

| The FAIR principles |

The FAIR principles are a set of guidelines developed by the scientific community to make it easier for machines and humans to share and reuse research data. The four main principles are findability, accessibility, interoperability and reusability. |